IB Scheduling: Go + Next.js Booking Platform

Portfolio booking engine: Supabase Auth + Postgres, Go API (chi, pgx, OIDC JWT) on Railway, Next.js on Vercel, Prometheus/Grafana for metrics - race-safe reservations and full telemetry.

Architecture at a glance

The summary above names the pieces; here is how the work is divided. Next.js (TypeScript) owns the product UI and the browser session. Go owns the booking rules, JWT verification against Supabase's OIDC issuer, and Postgres access via pgx. Nothing authoritative runs in the client.

- Identity: Supabase Auth issues JWTs; Next keeps the session; Go verifies tokens with OIDC (JWKS).

- Data: Single Postgres (Supabase). Go connects with DATABASE_URL using a service / pooler role - never exposed in the browser.

- Deploy pattern: Next on Vercel, Go API on Railway (long-lived), CORS restricted to the web origin.

The Go service never sees the Supabase anon key - only the user's access_token as a Bearer JWT, verified against the Auth issuer.
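
The Bearer flow described above can be sketched as HTTP middleware. This is a minimal sketch using only the standard library, not the project's actual code: TokenVerifier, requireUser, fakeVerifier, and statusWith are hypothetical names, and the fake verifier stands in for a real OIDC/JWKS check of signature, issuer, and expiry.

```go
package main

import (
	"context"
	"errors"
	"fmt"
	"net/http"
	"net/http/httptest"
	"strings"
)

// TokenVerifier is a hypothetical seam for the OIDC/JWKS check. A real
// implementation would validate the signature, issuer, and expiry.
type TokenVerifier interface {
	Verify(ctx context.Context, rawToken string) (subject string, err error)
}

// subjectKey is the context key under which the verified JWT sub is stored.
type subjectKey struct{}

// bearerToken extracts the token from an "Authorization: Bearer <token>" value.
func bearerToken(header string) (string, bool) {
	scheme, token, found := strings.Cut(header, " ")
	token = strings.TrimSpace(token)
	if !found || !strings.EqualFold(scheme, "Bearer") || token == "" {
		return "", false
	}
	return token, true
}

// requireUser rejects requests without a valid token and passes the
// verified subject to the next handler through the request context.
func requireUser(v TokenVerifier, next http.Handler) http.Handler {
	return http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		raw, ok := bearerToken(r.Header.Get("Authorization"))
		if !ok {
			http.Error(w, "missing bearer token", http.StatusUnauthorized)
			return
		}
		sub, err := v.Verify(r.Context(), raw)
		if err != nil {
			http.Error(w, "invalid token", http.StatusUnauthorized)
			return
		}
		ctx := context.WithValue(r.Context(), subjectKey{}, sub)
		next.ServeHTTP(w, r.WithContext(ctx))
	})
}

// fakeVerifier is a demo stand-in that maps known token strings to subjects.
type fakeVerifier map[string]string

func (f fakeVerifier) Verify(_ context.Context, raw string) (string, error) {
	if sub, ok := f[raw]; ok {
		return sub, nil
	}
	return "", errors.New("unknown token")
}

// statusWith sends one request through the guarded handler and returns the status code.
func statusWith(authHeader string) int {
	whoami := http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		fmt.Fprint(w, r.Context().Value(subjectKey{}))
	})
	h := requireUser(fakeVerifier{"good-token": "user-123"}, whoami)
	req := httptest.NewRequest(http.MethodGet, "/api/v1/reservations", nil)
	if authHeader != "" {
		req.Header.Set("Authorization", authHeader)
	}
	rec := httptest.NewRecorder()
	h.ServeHTTP(rec, req)
	return rec.Code
}

func main() {
	fmt.Println(statusWith("Bearer good-token")) // 200
	fmt.Println(statusWith("Bearer forged"))     // 401
	fmt.Println(statusWith(""))                  // 401
}
```

Keeping verification behind an interface means handlers only ever see a verified subject, and the JWKS-backed verifier can be swapped for a fake in tests.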

System diagram

How the browser, Next.js, Supabase (Auth + Postgres), and the Go API exchange requests. The observability part of the diagram covers the metrics scrape and query path (Prometheus/Grafana), not centralized logging.

Deployment diagram

How Supabase, Vercel, and Railway services connect in production.

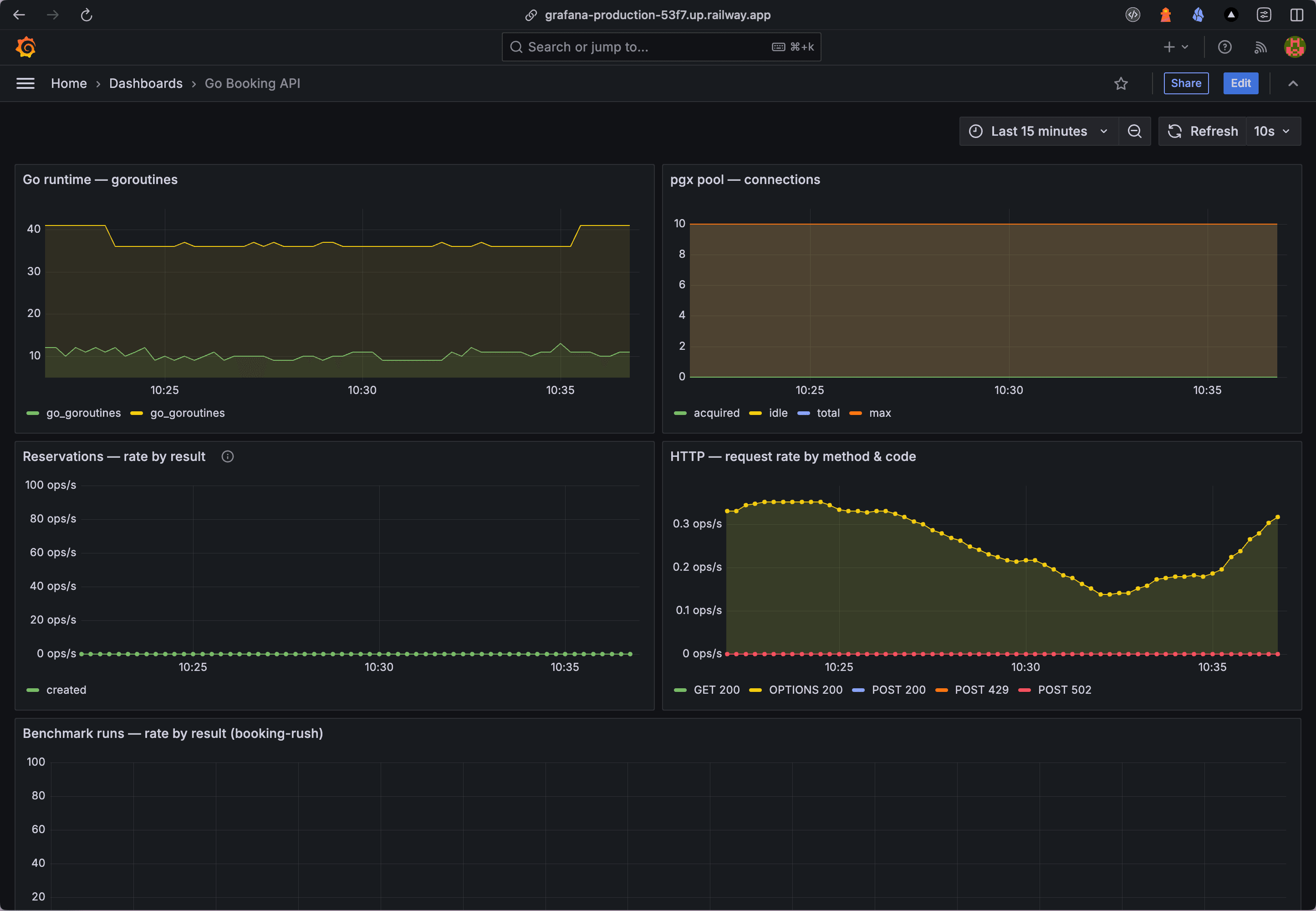

Telemetry and monitoring

The stack exposes a full metrics path: the Go API serves Prometheus text at /metrics; Prometheus scrapes and stores time-series; Grafana dashboards query Prometheus (e.g. PromQL). This is the operational layer for runtime and app health.

- Grafana (Railway) - dashboards and queries

- Prometheus (Railway) - scrape targets and TSDB

- Go API /metrics - raw Prometheus exposition from the booking service
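
To make the /metrics leg of that path concrete, here is a minimal sketch of a handler that writes the Prometheus text exposition format by hand. It is illustrative only: a real service would more likely use a Prometheus client library, and the counter type and the metric name booking_reservations_created_total are assumptions, not names taken from the project.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
	"sync/atomic"
)

// counter is a hypothetical, hand-rolled monotonic counter.
type counter struct {
	name, help string
	value      atomic.Uint64
}

// exposition renders the counter in Prometheus text format:
// a HELP line, a TYPE line, then the sample itself.
func (c *counter) exposition() string {
	return fmt.Sprintf("# HELP %s %s\n# TYPE %s counter\n%s %d\n",
		c.name, c.help, c.name, c.name, c.value.Load())
}

// metricsHandler serves every registered counter as plain text.
func metricsHandler(cs ...*counter) http.Handler {
	return http.HandlerFunc(func(w http.ResponseWriter, _ *http.Request) {
		w.Header().Set("Content-Type", "text/plain; version=0.0.4; charset=utf-8")
		for _, c := range cs {
			fmt.Fprint(w, c.exposition())
		}
	})
}

func main() {
	created := &counter{
		name: "booking_reservations_created_total", // assumed metric name
		help: "Reservations confirmed by the API.",
	}
	created.value.Add(3)

	// Simulate one Prometheus scrape against the handler.
	rec := httptest.NewRecorder()
	metricsHandler(created).ServeHTTP(rec, httptest.NewRequest(http.MethodGet, "/metrics", nil))
	fmt.Print(rec.Body.String())
}
```

Prometheus would scrape this endpoint on an interval, and a Grafana panel could then chart a PromQL expression such as rate(booking_reservations_created_total[5m]).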

Go booking API dashboard (Grafana)

Go API surface

Public vs JWT-protected routes are mounted in apps/api/cmd/server/main.go. Protected handlers expect Authorization: Bearer <supabase access_token>.

| Method | Path | Auth |
|---|---|---|
| GET | /health | None |
| GET | /api/v1/db-status | Bearer |
| GET | /api/v1/availability | Bearer |
| GET | /api/v1/reservations | Bearer |
| POST | /api/v1/reservations | Bearer |
| POST | /api/v1/slots | Bearer |
| POST | /api/v1/benchmark/booking-rush | Bearer |
| POST | /api/v1/mimic/notification/email | Bearer |
| POST | /api/v1/mimic/notification/whatsapp | Bearer |
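
The public/protected split in the table can be sketched with two muxes. The project mounts its routes with chi; this sketch uses the standard library's http.ServeMux as a stand-in so it runs without dependencies. The helpers protect, only, newRouter, and statusFor are hypothetical, and protect checks only that a Bearer header is present rather than verifying the JWT.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
	"strings"
)

// protect is a placeholder guard: it only checks for a Bearer header.
// Real JWT verification is deliberately left out of this sketch.
func protect(next http.Handler) http.Handler {
	return http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		if !strings.HasPrefix(r.Header.Get("Authorization"), "Bearer ") {
			http.Error(w, "unauthorized", http.StatusUnauthorized)
			return
		}
		next.ServeHTTP(w, r)
	})
}

// only restricts a handler to a single HTTP method.
func only(method string, h http.HandlerFunc) http.Handler {
	return http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		if r.Method != method {
			http.Error(w, "method not allowed", http.StatusMethodNotAllowed)
			return
		}
		h(w, r)
	})
}

func ok(w http.ResponseWriter, _ *http.Request) { w.WriteHeader(http.StatusOK) }

// newRouter mounts /health publicly and everything under /api/v1/ behind protect.
func newRouter() http.Handler {
	api := http.NewServeMux()
	api.Handle("/api/v1/availability", only(http.MethodGet, ok))
	api.Handle("/api/v1/slots", only(http.MethodPost, ok))

	root := http.NewServeMux()
	root.Handle("/health", only(http.MethodGet, ok))
	root.Handle("/api/v1/", protect(api))
	return root
}

// statusFor issues one in-memory request and returns the response status.
func statusFor(h http.Handler, method, path, auth string) int {
	req := httptest.NewRequest(method, path, nil)
	if auth != "" {
		req.Header.Set("Authorization", auth)
	}
	rec := httptest.NewRecorder()
	h.ServeHTTP(rec, req)
	return rec.Code
}

func main() {
	r := newRouter()
	fmt.Println(statusFor(r, "GET", "/health", ""))                      // 200
	fmt.Println(statusFor(r, "GET", "/api/v1/availability", ""))         // 401
	fmt.Println(statusFor(r, "GET", "/api/v1/availability", "Bearer t")) // 200
	fmt.Println(statusFor(r, "GET", "/api/v1/slots", "Bearer t"))        // 405
}
```

Wrapping the whole /api/v1/ subtree in one guard means a newly added route is protected by default instead of relying on each handler to opt in.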

Database invariants

- public.resources - bookable entity

- public.slots - UNIQUE (resource_id, starts_at) - discrete windows

- public.reservations - UNIQUE (slot_id) - at most one confirmed row per slot (ON CONFLICT target)

- reservations.user_id references auth.users(id) - aligns with JWT sub

Booking mechanics (race-safe)

POST /api/v1/reservations with a body of { "slot_id": "uuid" } runs in a single transaction: lock the slot row, insert the reservation, commit. FOR UPDATE on the slot row serializes competing bookings, and the unique constraint on slot_id guarantees at most one reservation per slot; a duplicate insert returns HTTP 409 (Conflict).
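
The lock-then-insert pattern can be illustrated with an in-memory stand-in for Postgres. This is a sketch under stated assumptions, not the service's code: a mutex plays the role of the row lock, a map keyed by slot ID plays the role of UNIQUE (slot_id), and the SQL constants, column names, and the 201 success status are guesses at what the real transaction might look like.

```go
package main

import (
	"errors"
	"fmt"
	"net/http"
	"sync"
)

// Assumed statements for the real transaction (column names are guesses).
const (
	lockSlotSQL          = `SELECT id FROM public.slots WHERE id = $1 FOR UPDATE`
	insertReservationSQL = `INSERT INTO public.reservations (slot_id, user_id) VALUES ($1, $2)`
)

var ErrSlotTaken = errors.New("slot already reserved")

// memStore is an in-memory stand-in for Postgres.
type memStore struct {
	mu     sync.Mutex        // plays the role of the FOR UPDATE row lock
	bySlot map[string]string // one entry per slot, like UNIQUE (slot_id)
}

// reserve books the slot for the user, or fails if it is already taken.
func (s *memStore) reserve(slotID, userID string) error {
	s.mu.Lock()
	defer s.mu.Unlock()
	if _, taken := s.bySlot[slotID]; taken {
		return ErrSlotTaken
	}
	s.bySlot[slotID] = userID
	return nil
}

// statusFor maps a booking result to an HTTP status (201 is an assumption).
func statusFor(err error) int {
	switch {
	case err == nil:
		return http.StatusCreated
	case errors.Is(err, ErrSlotTaken):
		return http.StatusConflict
	default:
		return http.StatusInternalServerError
	}
}

// bookingRush fires n concurrent bookings at one slot and tallies the outcomes.
func bookingRush(s *memStore, slotID string, n int) (created, conflicts int) {
	var wg sync.WaitGroup
	var tally sync.Mutex
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func(i int) {
			defer wg.Done()
			code := statusFor(s.reserve(slotID, fmt.Sprintf("user-%d", i)))
			tally.Lock()
			defer tally.Unlock()
			switch code {
			case http.StatusCreated:
				created++
			case http.StatusConflict:
				conflicts++
			}
		}(i)
	}
	wg.Wait()
	return created, conflicts
}

func main() {
	s := &memStore{bySlot: map[string]string{}}
	created, conflicts := bookingRush(s, "slot-1", 50)
	fmt.Println(created, conflicts) // 1 49
}
```

However the 50 goroutines interleave, exactly one booking succeeds and the other 49 map to 409. That is the property the row lock and unique constraint provide in the database.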

GET /api/v1/availability returns open slots (no confirmed reservation) via LEFT JOIN; the UI sends the Supabase access token on every call through a small fetch wrapper.
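
The availability query is an anti-join: keep each slot that has no matching reservation. The sketch below shows one plausible shape for the SQL as a constant, with guessed column names, next to an in-memory equivalent of the same filter. Slot and openSlots are hypothetical names. If reservations carried a status column, the confirmed-only condition would belong in the join's ON clause.

```go
package main

import "fmt"

// availabilitySQL is an assumed shape for the LEFT JOIN anti-join:
// slots with no matching reservation row come back with r.slot_id NULL.
const availabilitySQL = `
SELECT s.id, s.resource_id, s.starts_at
FROM public.slots s
LEFT JOIN public.reservations r ON r.slot_id = s.id
WHERE r.slot_id IS NULL
ORDER BY s.starts_at`

// Slot mirrors the columns selected above (hypothetical type).
type Slot struct {
	ID, ResourceID, StartsAt string
}

// openSlots is the in-memory equivalent of the anti-join: it keeps only
// the slots whose ID is not in the set of reserved slot IDs.
func openSlots(slots []Slot, reserved map[string]bool) []Slot {
	open := make([]Slot, 0, len(slots))
	for _, s := range slots {
		if !reserved[s.ID] {
			open = append(open, s)
		}
	}
	return open
}

func main() {
	slots := []Slot{
		{ID: "s1", ResourceID: "room-a", StartsAt: "2026-01-10T09:00:00Z"},
		{ID: "s2", ResourceID: "room-a", StartsAt: "2026-01-10T10:00:00Z"},
	}
	reserved := map[string]bool{"s1": true}
	for _, s := range openSlots(slots, reserved) {
		fmt.Println(s.ID) // s2
	}
}
```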

Source and live demo

Full source and deployment notes live in the repo sibtihaj/go-booking-system. Try the hosted app at go-booking-system.vercel.app, and see the Architecture pages for interactive diagrams.

Syed Ibtihaj


© 2026 — Built with Next.js 16